Prompt Engineering Guide 2025: How to Write Better Prompts for ChatGPT

Prompt engineering is now one of the most valuable skills for professionals using ChatGPT and other generative AI tools. In 2025, it’s no longer a clever trick or a temporary trend; it’s a systematic method for producing precise, creative, and trustworthy results from large language models.

As these systems advance, the best prompt engineers are moving beyond simple phrasing and learning to apply structure, logic, and reasoning scaffolds. The most advanced prompt engineering techniques focus on clarity, controlled output, and repeatable success across models.

After completing a prompt engineering course and spending months testing real-world applications, I realized how quickly best practices change. This guide on prompt engineering in 2025 brings together the most effective techniques, ChatGPT-specific strategies, and lessons learned from real-world use.

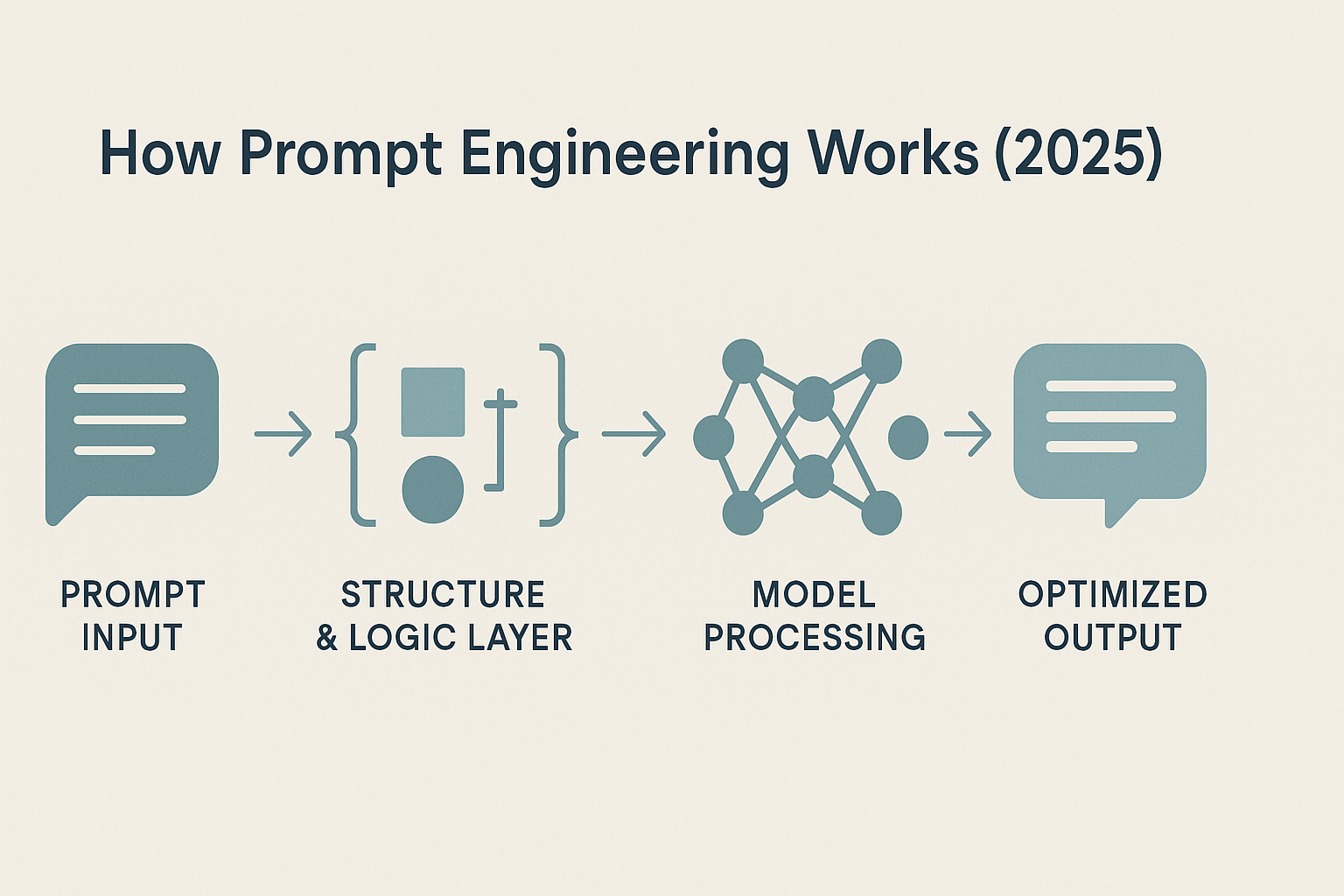

How Prompt Engineering Works in 2025

To understand prompt engineering best practices in 2025, it helps to look at how prompts actually shape model behavior. Each time you write a prompt, the model is not simply reacting to words. It is interpreting structure, tone, and logical flow to predict what should come next.

At its core, a prompt is simply the text or instruction you give an AI to guide its response. What makes prompt engineering different is the method behind that text: the way you structure information, define roles, and control context to produce a consistent outcome.

The best prompt engineers treat this process like system design. They test, measure, and refine to reach consistent results. A high-quality prompt is not just a good guess; it is the result of structured thinking that balances clarity, context, and constraints.

Modern models have become increasingly sensitive to how you ask, not just what you ask. A vague request can leave the model uncertain, while a clear, role-based instruction can anchor its reasoning and lead to more accurate, reproducible outputs.

This evolution is why effective prompting now feels closer to programming than to simple writing. It is about understanding the model’s reasoning process, guiding it step by step rather than hoping for the right outcome. That shift marks the biggest change in prompt engineering best practices for 2025. Success now depends less on clever phrasing and more on strategic structure.

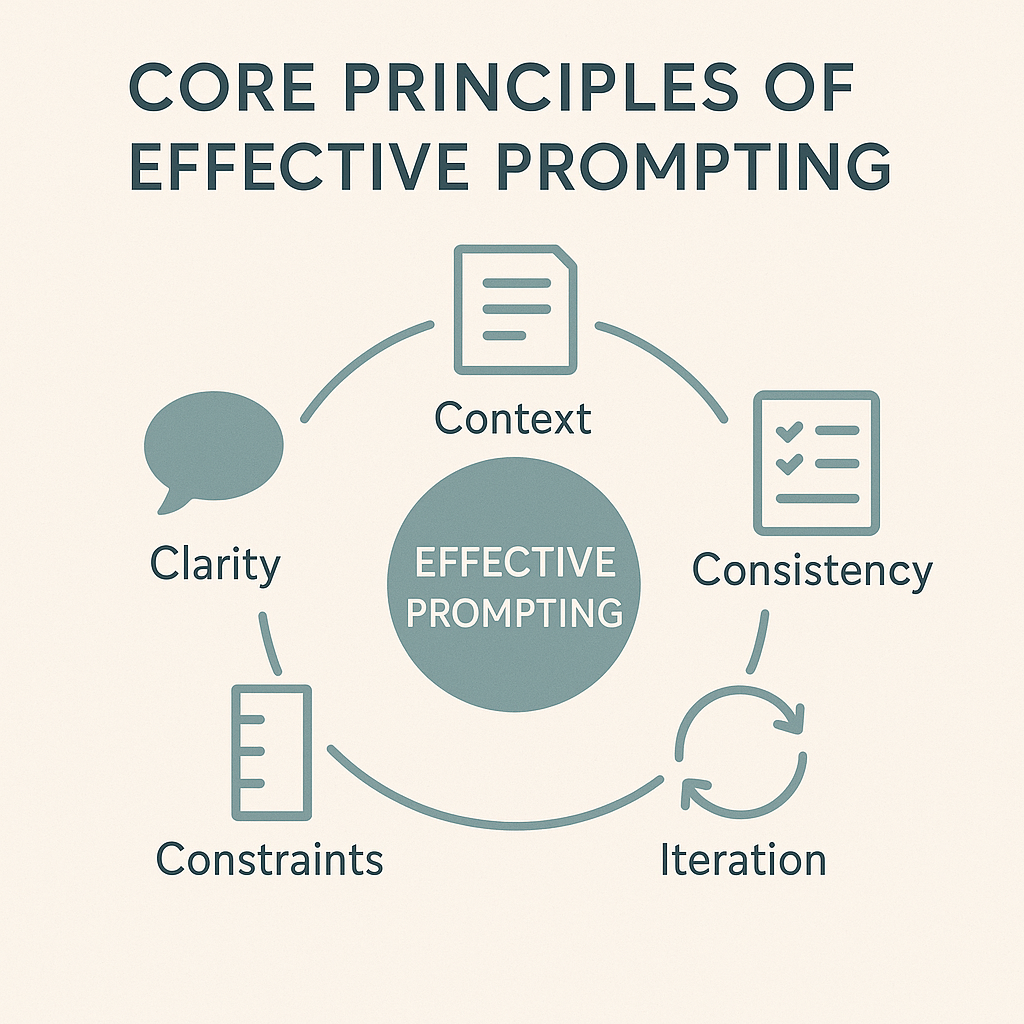

5 Core Principles of Effective Prompting

Before diving into specific prompt engineering best practices for 2025, it helps to understand what makes a prompt effective. A well-constructed prompt gives ChatGPT a clear task, relevant context, and a defined output structure. Even small adjustments to phrasing or layout can significantly change the accuracy and usefulness of the response.

Guidance from model providers and hands-on testing in 2025 consistently point to clarity, context, and specificity as the factors that most reliably improve results when working with advanced LLMs.

- Clarity Over Creativity

- Context Is the Control Mechanism

- Consistency Creates Reliability

- Constraints Improve Quality

- Iteration Is the Real Skill

1. Clarity Over Creativity

The clearer the prompt, the better ChatGPT performs. Avoid unnecessary adjectives, layered requests, or emotional phrasing that makes intent harder to interpret. Direct language leads to more accurate results, which is why clarity remains one of the core prompt engineering best practices in 2025.

Example: How Clarity Changes Results

| Prompt Type | Example Prompt | Likely Output |
|---|---|---|
| ❌ Unclear | Write something inspiring about productivity. | Generic motivational advice with no focus or structure. |
| ✅ Clear | Write a 50-word motivational paragraph about productivity for remote workers. | Focused, concise, and directly relevant to the intended audience. |

Takeaway: Specific structure and audience cues help the model produce sharper, more useful results.

Quick Tip: If you can’t summarize your prompt in one sentence, rewrite it until you can. Simplicity improves accuracy more than length.

2. Context Is the Control Mechanism

Models perform best when they understand their role and the boundaries of the task. Supplying clear context such as “You are a data analyst” or “You are an academic editor” immediately improves accuracy, reasoning, and relevance. From my experience, this is one of the most effective ways to reduce ambiguity and guide ChatGPT toward more reliable outputs. Strong contextual framing remains a core prompt engineering best practice because it narrows interpretation and reduces errors.

Example: How Context Changes the Output

| Prompt Type | Example Prompt | Likely Output |
|---|---|---|
| ❌ No Context | Summarize this report. | A vague or incomplete summary that misses key details. |
| ✅ With Context | You are a financial analyst. Summarize this quarterly report for a management audience in three bullet points. | Focused analysis emphasizing financial trends and executive-level insights. |

Takeaway: Context tells the model how to think, not just what to do. Defining a role and audience aligns tone, depth, and focus with your goals.

💡 Quick Tip: Start every prompt with a short role statement, even for creative tasks. It anchors the model’s reasoning from the first token.

3. Consistency Creates Reliability

If you ask for the same task in different ways each time, the results will vary. Prompts work best when they follow a steady pattern. Using consistent instructions, roles, and formatting makes the responses more predictable, because the model has a clear structure to follow. From my own experience, keeping this consistency is one of the simplest ways to reduce randomness and get higher-quality results.

| Prompt Type | Example Prompt | Outcome |
|---|---|---|
| ❌ Inconsistent | “Write a marketing email.” / “Can you make a short ad?” / “Draft something to promote this product.” | Each version produces a different tone, length, and style. |
| ✅ Consistent | “You are a marketing copywriter. Write a 100-word promotional email for [product] using a friendly, persuasive tone and ending with a clear call-to-action.” | Predictable tone, length, and structure across multiple prompts. |

Takeaway: Consistency is less about repetition and more about pattern discipline. When the model sees what you expect (the same roles, phrasing, and output logic), it has less to guess, so it delivers higher-quality results with less trial and error.

Quick Tip: Build reusable templates for recurring tasks (e.g., blog outlines, product descriptions, summaries). Adjust the content, not the framework.

4. Constraints Improve Quality

Boundaries consistently produce better results. When you specify word limits, tone, formatting, or the number of steps you want, the model stays focused and avoids drifting into irrelevant detail. Clear constraints reduce ambiguity and make the output far more accurate. In my experience, adding even a simple requirement such as “three bullets only” or “keep it under one paragraph” can dramatically improve quality.

In fact, you’ve likely noticed that every example in this guide already uses constraints, whether it’s a defined role, a set structure, or a limit on words or format. That’s intentional. Constraints act as invisible scaffolding that helps the model stay logical, consistent, and aligned with your expectations.

Example: How Constraints Sharpen Output

| Prompt Type | Example Prompt | Output Style |
|---|---|---|
| ❌ No Constraints | “Explain how neural networks learn.” | Long, unfocused explanation with uneven technical depth. |
| ✅ With Constraints | “Explain how neural networks learn in under 100 words, written for a high school student.” | Focused, clear, and audience-appropriate response. |

Takeaway: Constraints don’t restrict creativity; they give it form. The best prompt engineering practices in 2025 rely on constraint-based design to make AI outputs sharper, faster, and easier to control.

Quick Tip: Build constraints into your prompts by default. Even a short limit like “three examples max” or “use Markdown headings” improves clarity and repeatability.

5. Iteration Is the Real Skill

Prompt engineering is not about finding one perfect formula. It is about refining and testing prompts, tracking what works, and adapting your strategy as models evolve.

The best practitioners treat prompting like an experimental process. They build, test, and fine-tune in small steps until the model produces the level of precision or creativity they’re aiming for.

Example: Iteration in Action

| Version | Prompt | Outcome |
|---|---|---|
| One | “Write a social media caption for a travel photo.” | Generic and repetitive. |
| Two | “Write a short, engaging caption for a travel photo showing mountains at sunrise.” | More vivid but inconsistent tone. |
| Three | “You are a travel blogger. Write a 20-word caption for a sunrise mountain photo that feels personal and inspiring.” | Focused, consistent, and emotionally resonant output. |

Takeaway: Iteration is the real differentiator between casual users and skilled prompt engineers. Each test teaches you how the model responds, helping you shape inputs that consistently produce your desired result.

💡 Quick Tip: Save your best-performing prompts and small improvements in a personal prompt library. Over time, you’ll develop reliable templates that evolve with the models.

Advanced Prompt Engineering Techniques (2025 Edition)

Once the core principles are in place, the next step is applying them through practical techniques. The methods below reflect what consistently works in 2025, supported by current research and real-world testing. They move beyond simple prompting and give you more control, higher accuracy, and more predictable results when working with ChatGPT or any large language model.

- Zero-Shot and Few-Shot Prompting

- Role-Based Prompting

- Chain-of-Thought Reasoning

- Format and Output Constraints

- Combining Techniques for Complex Tasks

Each technique functions as a building block you can mix, stack, and adapt depending on the task. The stronger your technique library becomes, the more confidently you can guide the model and produce high-quality outputs.

1. Zero-Shot and Few-Shot Prompting

Zero-shot prompting gives the model a task without any examples. This relies entirely on the model’s existing knowledge.

Few-shot prompting, on the other hand, provides one or more examples so the model can mimic the pattern you want.

Example: Few-Shot Translation

English: Hello → Spanish: Hola

English: How are you? → Spanish: ¿Cómo estás?

English: What is your name? →

Output: Spanish: ¿Cómo te llamas?

Why it works:

Few-shot prompts give the model contextual examples to imitate, improving accuracy and tone in structured tasks such as classification, translation, or summarization.
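If you build prompts in code, the few-shot pattern above is just a string assembled from example pairs. The sketch below is a minimal illustration, not a library API: `build_few_shot_prompt` is a hypothetical helper, and the arrow format simply copies the translation example.

```python
# A minimal sketch: assemble a few-shot translation prompt from example pairs.
# The helper name is hypothetical; the arrow format mirrors the example above.

def build_few_shot_prompt(examples: list[tuple[str, str]], query: str) -> str:
    """Return one line per example, ending with the open query line."""
    lines = [f"English: {src} → Spanish: {tgt}" for src, tgt in examples]
    # The last line is left open so the model completes the pattern.
    lines.append(f"English: {query} →")
    return "\n".join(lines)


examples = [
    ("Hello", "Hola"),
    ("How are you?", "¿Cómo estás?"),
]
prompt = build_few_shot_prompt(examples, "What is your name?")
print(prompt)
```

Passing an empty example list produces a zero-shot prompt, which makes it easy to compare both approaches on the same query.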

2. Role-Based Prompting

Assigning the model a clear persona helps it generate outputs in the correct tone, voice, and level of expertise. It immediately narrows the model’s focus and reduces vague or generic answers.

Example:

You are a digital marketing strategist. Write a 3-step plan to improve organic reach on Instagram for a travel blog.

Why it works:

Role framing tells the model how to think before it starts writing. It sharpens tone, improves reasoning, and produces more consistent results. From my own experience, this is one of the most effective techniques available, and I use it in almost every prompt.
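Many chat-style APIs let you place the persona in a separate system message instead of the user prompt. The sketch below only builds that data structure and calls no API; it assumes the common `role`/`content` message shape, whose exact field names vary by provider, and `role_prompt` is a hypothetical helper. Putting the role sentence at the top of a single prompt, as in the example above, works too.

```python
# A minimal sketch: express role-based prompting as chat-style messages.
# Assumes the common "system"/"user" role/content shape; no API is called.

def role_prompt(persona: str, task: str) -> list[dict[str, str]]:
    """Put the persona in a system message and the task in a user message."""
    return [
        {"role": "system", "content": f"You are {persona}."},
        {"role": "user", "content": task},
    ]


messages = role_prompt(
    "a digital marketing strategist",
    "Write a 3-step plan to improve organic reach on Instagram for a travel blog.",
)
for message in messages:
    print(f"{message['role']}: {message['content']}")
```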

3. Chain-of-Thought Reasoning

Chain-of-thought prompting encourages the model to reason step by step instead of jumping straight to an answer.

Example:

Let’s think through this step by step.

A train leaves a station at 8 a.m. traveling 80 km/h. A second train leaves the same station at 9 a.m., traveling 100 km/h in the same direction. When does the second train catch up with the first?

Output:

The first train has a one-hour lead, covering 80 km.

The speed difference is 20 km/h, so it takes 4 hours to close the gap.

They meet at 1 p.m.

Why it works:

Requesting step-by-step reasoning reduces logical errors and makes the output easier to verify. This is especially useful for analytical or multi-step tasks such as calculations, debugging, planning, and decision-making. In my own prompting, adding a simple instruction like “think through this step by step” consistently improves both accuracy and clarity.
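Step-by-step answers are easy to check because each step can be recomputed. The sketch below reproduces the three reasoning steps, assuming both trains leave the same station and travel in the same direction (the reading the worked answer implies); `catch_up_time` is a hypothetical helper and the calendar date is arbitrary.

```python
# Recompute the step-by-step answer, assuming both trains leave the same
# station and travel in the same direction. The date below is arbitrary.
from datetime import datetime, timedelta

def catch_up_time(start_a: datetime, speed_a: float,
                  start_b: datetime, speed_b: float) -> datetime:
    """Return when train B (later, faster) catches train A."""
    head_start_h = (start_b - start_a).total_seconds() / 3600
    lead_km = speed_a * head_start_h   # step 1: A's lead when B departs
    closing_kmh = speed_b - speed_a    # step 2: speed difference
    if closing_kmh <= 0:
        raise ValueError("Train B never catches up")
    hours = lead_km / closing_kmh      # step 3: time to close the gap
    return start_b + timedelta(hours=hours)


meet = catch_up_time(datetime(2025, 1, 1, 8), 80, datetime(2025, 1, 1, 9), 100)
print(meet.strftime("%H:%M"))  # 13:00
```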

4. Format and Output Constraints

Models often generate strong content but inconsistent formatting. Adding clear format rules makes it far more likely that the output fits your use case every time.

Example:

Summarize the following report in exactly three bullet points. Each bullet should be under fifteen words.

Output:

- Sales grew 14% in Q3 driven by EU demand.

- Product returns dropped 8% year-over-year.

- Forecast projects stable growth for Q4.

Why it works:

Format constraints improve readability, consistency, and compatibility with automation tools. This is especially helpful when you use AI for dashboards, structured documentation, data processing, or any workflow that requires predictable formatting. From my experience, adding simple format rules is one of the easiest ways to eliminate clutter and get clean, usable outputs.
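When a reply feeds an automation tool, it helps to verify the format before using it. This minimal sketch checks the "exactly three bullets, each under fifteen words" rule from the example; `check_bullets` is a hypothetical helper, and it assumes bullets start with "- ".

```python
# A minimal sketch: verify a reply follows "exactly three bullets, each under
# fifteen words" before passing it to a downstream tool.

def check_bullets(reply: str, count: int = 3, max_words: int = 15) -> list[str]:
    """Return a list of problems; an empty list means the rules hold."""
    bullets = [line[2:].strip() for line in reply.splitlines()
               if line.startswith("- ")]
    problems = []
    if len(bullets) != count:
        problems.append(f"expected {count} bullets, found {len(bullets)}")
    for bullet in bullets:
        # "Under fifteen words" means fewer than max_words words.
        if len(bullet.split()) >= max_words:
            problems.append(f"too long: {bullet!r}")
    return problems


reply = """- Sales grew 14% in Q3 driven by EU demand.
- Product returns dropped 8% year-over-year.
- Forecast projects stable growth for Q4."""
print(check_bullets(reply))  # []
```

Returning a list of problems, rather than a single True/False, tells you which rule the model broke so you can tighten that part of the prompt.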

5. Combining Techniques for Complex Tasks

Some of the highest-quality outputs come from layering multiple prompt engineering techniques in one request. This approach gives the model clearer boundaries, a stronger reasoning path, and a precise target format.

Example:

You are a cybersecurity analyst.

Think step by step before writing your conclusion.

Provide the answer as a 3-bullet executive summary.

Why it works:

Layered prompting blends role framing, structured reasoning, and format constraints into a single instruction set. This reduces ambiguity, improves accuracy, and produces consistent results even for complex tasks. It mirrors how advanced users and professional AI teams work in 2025, since combining techniques gives far more control over tone, structure, and analytical depth. In practice, this is one of the fastest ways to upgrade the quality of any prompt, and I rely on it heavily when I need precise, decision-ready outputs.
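Layering can also be treated as a set of switches, which makes it easier to test whether each layer earns its place. The sketch below assembles the three instructions from the example; `layered_prompt` and the sample task text are hypothetical, and putting the task last is one ordering choice among several.

```python
# A minimal sketch: layer role, reasoning, and format instructions into one
# prompt. Each layer is optional, so you can test which ones actually help.
from typing import Optional

def layered_prompt(task: str, role: Optional[str] = None,
                   step_by_step: bool = False,
                   output_format: Optional[str] = None) -> str:
    """Join the chosen layers, one instruction per line, with the task last."""
    layers = []
    if role:
        layers.append(f"You are {role}.")
    if step_by_step:
        layers.append("Think step by step before writing your conclusion.")
    if output_format:
        layers.append(f"Provide the answer as {output_format}.")
    layers.append(task)
    return "\n".join(layers)


print(layered_prompt(
    "Assess the incident report below.",  # hypothetical task text
    role="a cybersecurity analyst",
    step_by_step=True,
    output_format="a 3-bullet executive summary",
))
```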

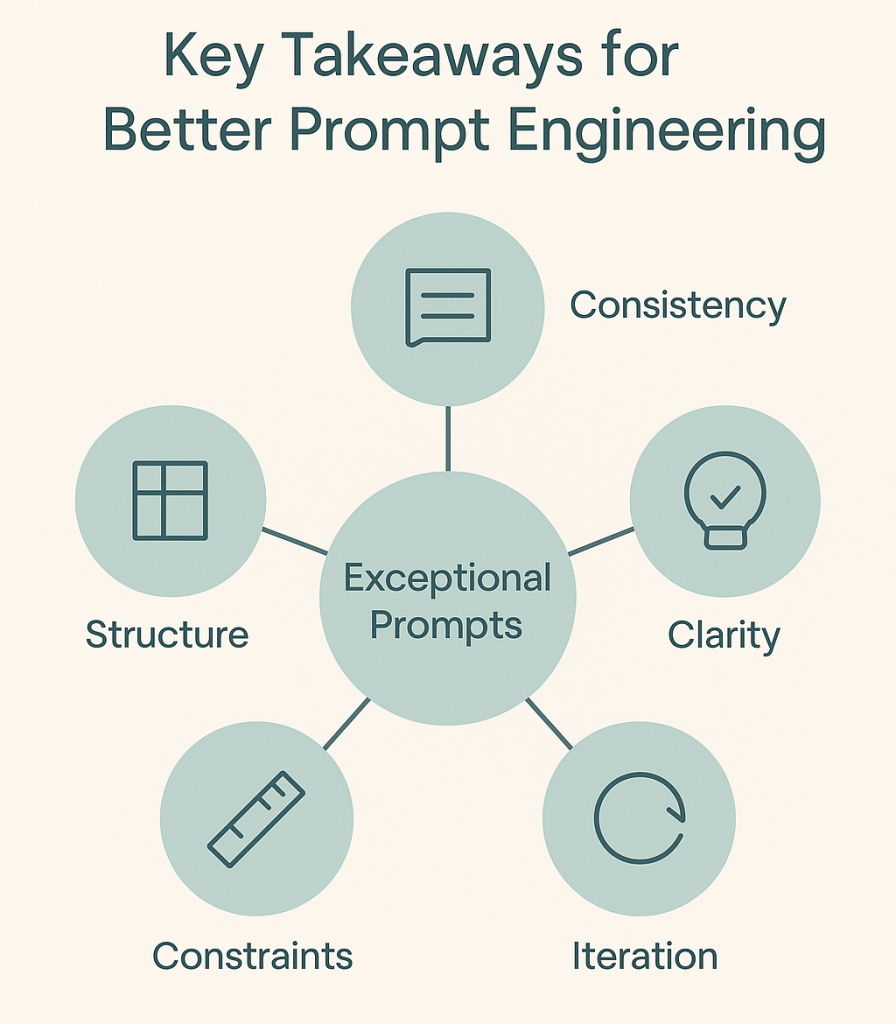

Key Takeaways for Better Prompt Engineering

Each of these techniques works on its own, but their real power shows when you combine them with intention. Clear roles, structured reasoning, and precise format rules create far more reliable outputs than any single method used in isolation. The users who get the best results are the ones who experiment regularly, document what works, and refine their prompts over time. The difference between a prompt that performs well and a prompt that performs exceptionally is usually structure, consistency, and deliberate iteration.

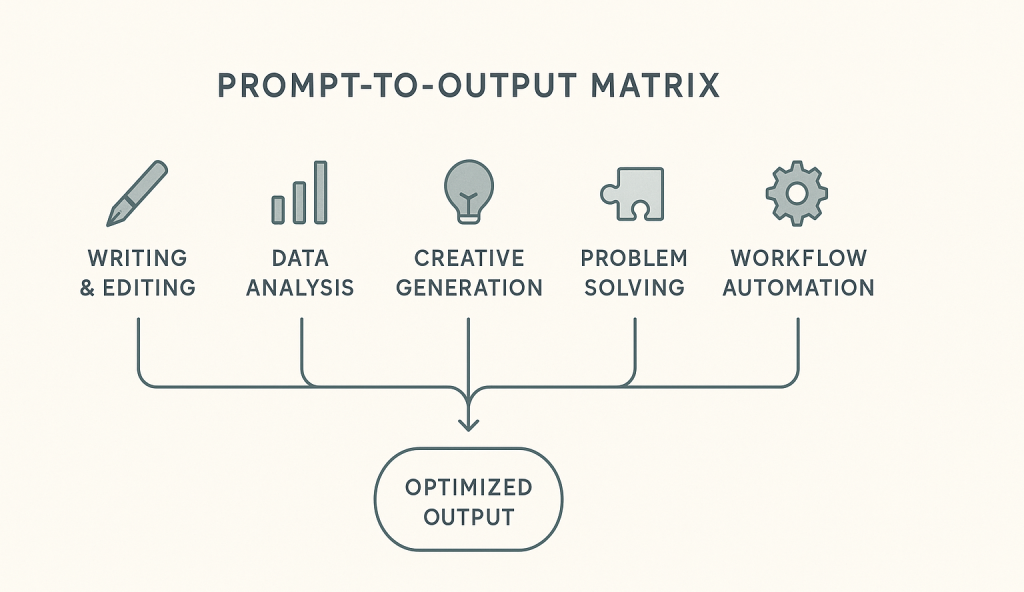

Real-World Prompt Engineering Examples

Theory matters, but prompt engineering only becomes useful when you can apply it to real tasks. The examples below show how each technique works in situations that professionals actually face in 2025. These scenarios reflect how writers, analysts, marketers, developers, and everyday users rely on structured prompting to produce clearer, more reliable outputs. Each example is concise, repeatable, and easy to adapt to your own workflow.

- Writing and Editing Prompts

- Data Analysis and Summarization Prompts

- Creative Generation Prompts

- Problem-Solving and Reasoning Prompts

- Workflow and Automation Prompts

1. Writing and Editing Prompts

Goal: Improve clarity, tone, and structure in professional writing.

Prompt Example:

You are an experienced editor. Rewrite the following paragraph to sound confident and persuasive for a business proposal while keeping it under 100 words.

Output Example:

Original: “We think our service could potentially help small companies improve their online visibility.”

Refined: “Our platform helps small businesses increase their online visibility through data-driven SEO strategies that deliver measurable results.”

Why It Works:

This combines role-based prompting (you are an editor) with format and tone constraints, ensuring consistent style and brevity without losing meaning.

For a deeper breakdown of how to improve the naturalness of AI-generated writing, see my guide on humanizing AI content.

2. Data Analysis and Summarization Prompts

Goal: Turn unstructured information into clear, actionable takeaways.

Prompt Example:

Analyze the meeting transcript below. List two main decisions, two open issues, and one action item for the next meeting. Present the result as bullet points.

Output Example:

- Approved the new marketing budget.

- Agreed to expand outreach to the DACH region.

- Decision pending on Q2 hiring plan.

- Outstanding issue: vendor contract review.

- Next step: finalize pricing model before Friday.

Why It Works:

The prompt defines structure and quantity, forcing the model to distill information accurately. It uses formatting constraints and context-specific framing, two of the most effective prompt engineering strategies in 2025.

If you are new to generative AI concepts, start with my Beginner’s Guide to Generative AI (2025) for context.

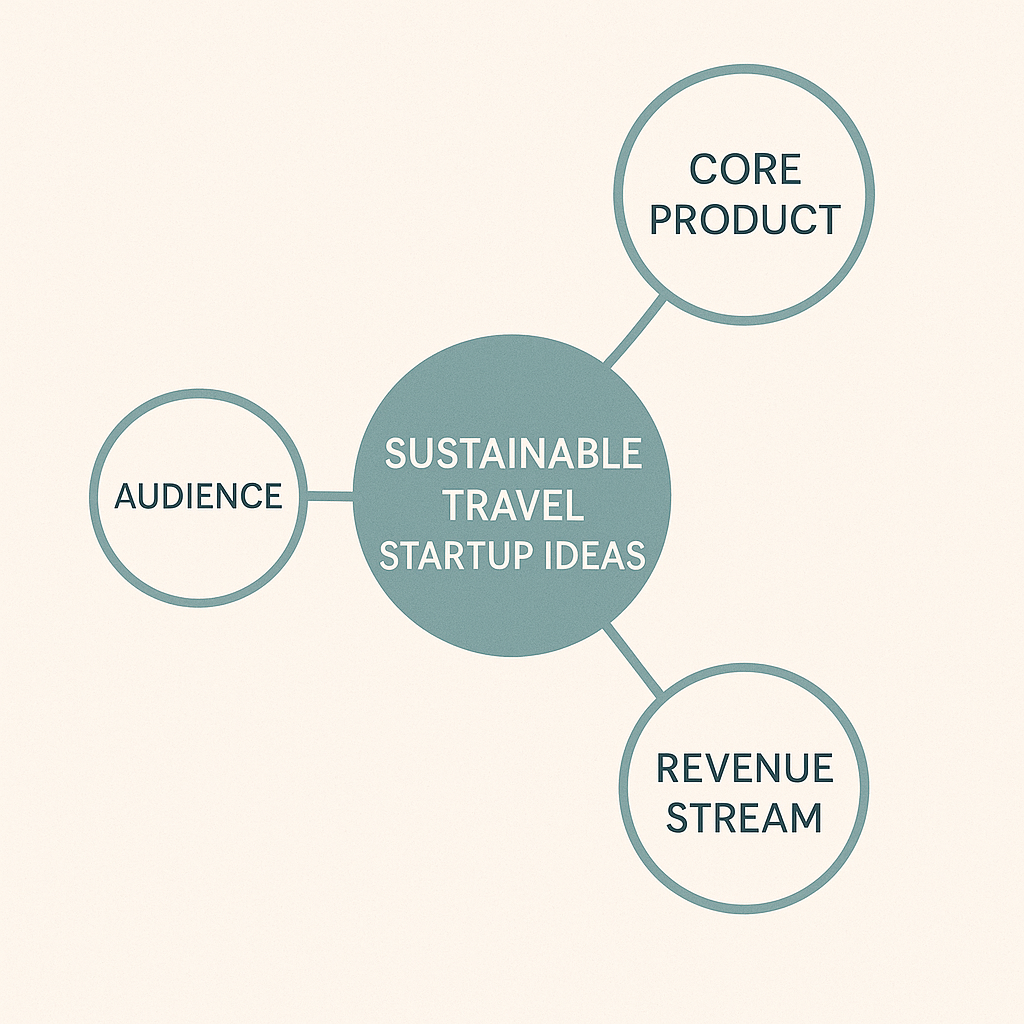

3. Creative Generation Prompts

Goal: Produce original ideas or content with defined focus and tone.

Prompt Example:

Generate three creative startup ideas focused on sustainable travel. For each, include the target audience, core product, and potential revenue stream.

Output Example (abridged to one line per idea):

- EcoHop – A carbon-neutral flight comparison app for eco-conscious travelers.

- GreenStay – Marketplace for verified sustainable hotels and hostels.

- ReTrail – Subscription-based hiking trips that fund local conservation projects.

Why It Works:

By narrowing the topic and defining deliverables, this prompt applies specificity and structured output, two principles that eliminate vague or repetitive results.

4. Problem-Solving and Reasoning Prompts

Goal: Improve accuracy in tasks that require logic and reasoning.

Prompt Example:

A train leaves City A at 10 a.m. traveling 80 km/h. Another leaves City B at 11 a.m. traveling 100 km/h toward City A. The distance between them is 420 km. Think step by step and calculate where they meet.

Output Example:

Train A travels for 1 hour before Train B departs, covering 80 km. Remaining distance: 340 km. Combined speed: 180 km/h. Time to meet: 340 ÷ 180 ≈ 1.89 hours (≈1h53m after 11 a.m., so around 12:53 p.m.). By then Train A has covered 80 + 80 × 1.89 ≈ 231 km, so they meet about 231 km from City A.

Why It Works:

This is a clear demonstration of chain-of-thought prompting, where the model is guided to reason through each step instead of jumping straight to an answer.
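Chain-of-thought output is only as reliable as its arithmetic, so it is worth recomputing. This sketch, using a hypothetical `head_on_meeting` helper, assumes the trains travel toward each other on the same 420 km route; it places the meeting about 1.89 hours after 11 a.m. (around 12:53 p.m.) and about 231 km from City A.

```python
# Recompute the head-on meeting problem: Train A leaves City A at 10:00 at
# 80 km/h, Train B leaves City B at 11:00 at 100 km/h, 420 km apart.

def head_on_meeting(distance_km: float, speed_a: float, speed_b: float,
                    head_start_h: float) -> tuple[float, float]:
    """Return (hours after B departs, km from City A) where the trains meet."""
    remaining = distance_km - speed_a * head_start_h  # gap when B departs
    hours = remaining / (speed_a + speed_b)           # close at combined speed
    km_from_a = speed_a * (head_start_h + hours)      # A's total distance
    return hours, km_from_a


hours, km = head_on_meeting(420, 80, 100, head_start_h=1)
print(f"{hours:.2f} h after 11:00, {km:.0f} km from City A")
# 1.89 h after 11:00, 231 km from City A
```

A quick sanity check is that both trains' distances add up to the full route: about 231 km for Train A plus about 189 km for Train B.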

If you want to explore how AI supports critical thinking and structured reasoning, read How to Use AI to Learn Faster (Without Losing Your Critical Thinking).

5. Workflow and Automation Prompts

Goal: Automate multi-step business communication tasks.

Prompt Example:

You are an operations manager. Summarize the following weekly report into three short paragraphs: (1) project progress, (2) blockers, and (3) next week’s priorities. Use a professional but conversational tone.

Output Example:

The marketing automation rollout is 85% complete, with testing underway.

The data team flagged one integration issue with CRM sync, which is being reviewed.

Next week’s focus is finalizing the launch checklist and preparing training material for the regional leads.

Why It Works:

It combines role assignment, format clarity, and tone control, all within one instruction. This structure works well for operational reporting and internal AI workflows.

To see how similar techniques streamline automation and coding for non-developers, visit ChatGPT Coding for Beginners.

Summary Table

| Goal | Technique Used | Example Prompt | Why It Works |
|---|---|---|---|
| Writing & Editing | Role + Format | Rewrite a paragraph for clarity and tone | Enforces structure and control |
| Data Analysis | Format + Context | Summarize meeting transcript with decisions and issues | Forces structured reasoning |
| Creative Generation | Specificity + Context | Generate three sustainable travel startup ideas | Guides focus and avoids vagueness |
| Problem Solving | Chain-of-Thought | Calculate meeting point for two trains | Improves reasoning and accuracy |
| Workflow Automation | Role + Format | Summarize weekly report into progress, blockers, and priorities | Automates professional communication |

These examples show that effective prompting is less about creativity and more about clarity of intent. When you define a role, provide relevant context, and specify structure, the model delivers results that are not only accurate but consistently aligned with your purpose.

Common Prompting Mistakes and How to Fix Them

Even with strong techniques in place, many people run into the same problems that weaken the quality, accuracy, or consistency of their results. Learning to avoid these mistakes is one of the fastest ways to improve your prompting skill and apply 2025 prompt engineering best practices more effectively.

The points below outline the errors that most often lead to poor outputs in ChatGPT and similar models, along with simple corrections that immediately improve performance.

| Mistake | Why It Happens | How to Fix It | Technique Involved |
|---|---|---|---|
| Too vague | Missing clarity and context | Add task and scope | Contextual framing |
| No output format | Model improvises structure | Define structure or length | Formatting control |
| Missing role | Tone mismatch | Assign a role or persona | Role-based prompting |
| Too many instructions | Confusion or partial results | Split into smaller steps | Prompt chaining |
| No iteration | Missed optimization | Refine after each output | Iterative prompting |
| Single-model testing | Poor transferability | Test across models | Cross-model validation |

Avoiding these mistakes leads to clearer, more accurate, and more consistent outputs. When you understand why these errors happen and how to correct them, you gain far more control over the model’s reasoning, tone, and structure. These fixes are practical, immediate improvements that help you get higher quality results across writing, analysis, problem solving, and everyday AI tasks.
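The "split into smaller steps" fix can be made concrete as a prompt chain, where each step's output becomes the next step's input. In this sketch `ask_model` stands for any function that sends a prompt and returns text; `run_chain` and the stub model are hypothetical placeholders so the plumbing runs without a real model.

```python
# A minimal sketch of prompt chaining: each step's output becomes the next
# step's input. `ask_model` is any prompt -> text function you supply.
from typing import Callable

def run_chain(steps: list[str], source: str,
              ask_model: Callable[[str], str]) -> str:
    """Run each instruction in order, feeding the previous result forward."""
    result = source
    for instruction in steps:
        # Each prompt is the current instruction plus the previous output.
        result = ask_model(f"{instruction}\n\n{result}")
    return result


calls: list[str] = []

def fake_model(prompt: str) -> str:
    # Hypothetical stub: records each prompt and returns a label, so you can
    # see how results flow between steps without calling a real model.
    calls.append(prompt)
    return f"output of step {len(calls)}"


steps = [
    "Extract the key facts from the text below.",
    "Group these facts into themes.",
    "Write a three-sentence summary of these themes.",
]
final = run_chain(steps, "Raw meeting notes go here.", fake_model)
print(final)     # output of step 3
print(calls[1])  # the second prompt contains the first step's output
```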

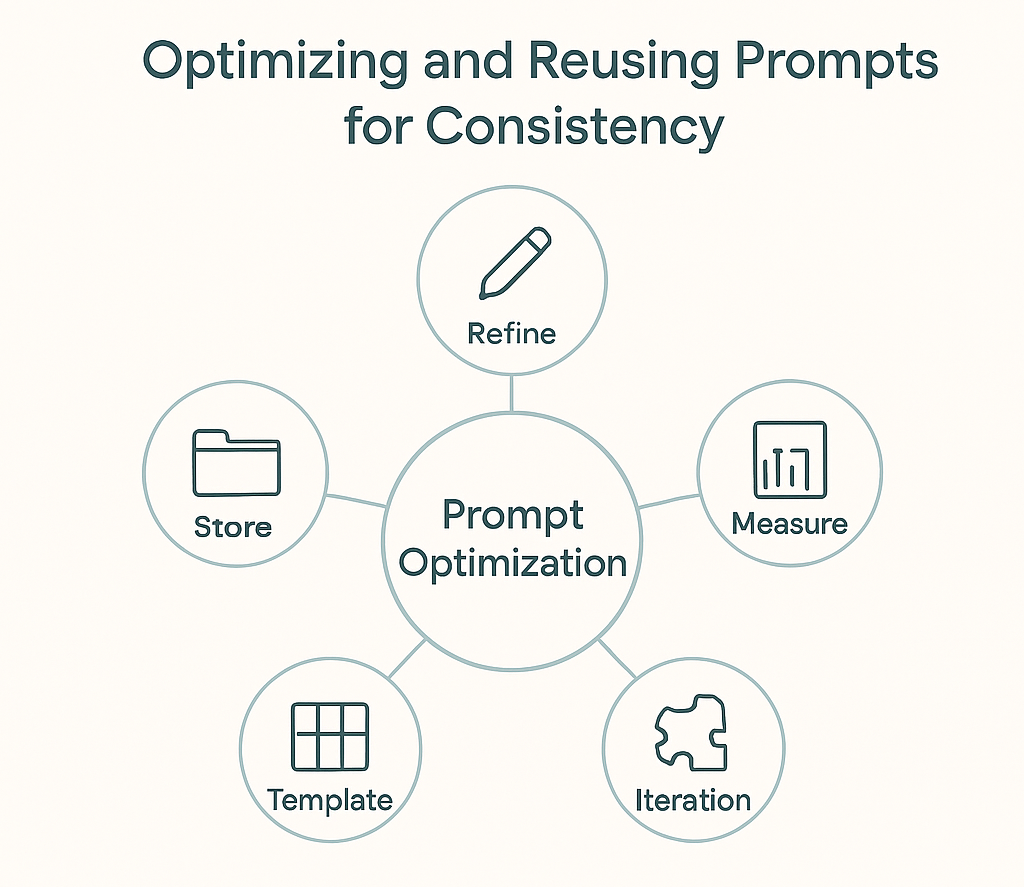

Optimizing and Reusing Prompts for Consistency

Once you know how to write effective prompts, the real gains come from refining and reusing them. In 2025, the people who work with AI at a high level treat every successful prompt as a reusable asset. They document what worked, simplify the structure, and build variations they can rely on for different tasks.

This habit saves time, reduces errors, and produces more predictable outputs. It also strengthens your overall prompt engineering skill because you learn which patterns consistently improve clarity, reasoning, and tone.

- Refine Prompts Like Drafts

- Store and Categorize Prompts

- Measure Output Quality

- Use Templates for Repetition

- Review and Refine Prompt Efficiency

1. Refine Prompts Like Drafts

Good prompts rarely work perfectly the first time. Think of each version as a new draft you improve based on results. Make small adjustments to your wording, structure, or instructions, then test again to see what changes the output. Over time, these refinements lead to more reliable and accurate responses.

Example:

V1: “Summarize this article.”

V2: “Summarize this article in three bullet points: (1) Key idea, (2) Data point, (3) Practical implication.”

V3: “You are a research analyst. Summarize this article in three bullet points using academic language and include the title of the source.”

Tracking versions helps identify patterns and shows what consistently produces the best results.

2. Store and Categorize Prompts

Organizing your prompts is essential for consistency. Group them by task type such as writing, brainstorming, data analysis, communication, or coding, and tag them by tone or format.

Example categories you might use:

- Writing prompts: editing, summarizing, rewriting

- Analysis prompts: meeting summaries, risk assessments, data extraction

- Creative prompts: idea generation, concept expansion

- Communication prompts: emails, reports, explanations

- Coding prompts: bug fixes, code translation, documentation

A clear prompt library helps you retrieve and reuse strong prompts quickly, especially for recurring projects or workflows.
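A prompt library does not need special tooling to start. The sketch below keeps entries in memory and filters them by category or tag; `PromptEntry` and `PromptLibrary` are hypothetical names, and in practice you might persist the entries to a file or database.

```python
# A minimal sketch of a tagged, in-memory prompt library. Class and field
# names are hypothetical; categories follow the list above.
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class PromptEntry:
    name: str
    category: str                       # e.g. "writing", "analysis", "coding"
    text: str
    tags: set[str] = field(default_factory=set)

class PromptLibrary:
    def __init__(self) -> None:
        self._entries: dict[str, PromptEntry] = {}

    def add(self, entry: PromptEntry) -> None:
        # Adding an entry with an existing name replaces the older version.
        self._entries[entry.name] = entry

    def find(self, category: Optional[str] = None,
             tag: Optional[str] = None) -> list[PromptEntry]:
        """Return entries matching the category and/or tag, sorted by name."""
        matches = [
            e for e in self._entries.values()
            if (category is None or e.category == category)
            and (tag is None or tag in e.tags)
        ]
        return sorted(matches, key=lambda e: e.name)


library = PromptLibrary()
library.add(PromptEntry("article-summary", "writing",
                        "Summarize this article in three bullet points.",
                        {"summary", "bullets"}))
library.add(PromptEntry("meeting-recap", "analysis",
                        "List the decisions, open issues, and action items.",
                        {"summary", "meetings"}))
print([e.name for e in library.find(tag="summary")])
```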

3. Measure Output Quality

The most reliable way to improve prompt performance is to evaluate results systematically.

Rate each output based on three core metrics:

| Metric | Question to Ask | Goal |
|---|---|---|
| Accuracy | Did the model complete the task correctly? | Eliminate misinterpretation |
| Clarity | Is the response readable and relevant? | Improve communication quality |
| Efficiency | Did it reduce time or effort? | Maximize productivity |

Tracking these results helps you refine prompt design logically instead of relying on guesswork.
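To compare prompt versions on these three metrics, you can log a score per metric for each output and average them. This sketch assumes a 1 to 5 scale (an assumption, not a standard), `average_scores` is a hypothetical helper, and the ratings in the usage lines are hypothetical placeholders rather than measured results.

```python
# A minimal sketch: average 1-5 ratings for accuracy, clarity, and efficiency
# across the outputs of one prompt version. The scale is an assumption.
from statistics import fmean

METRICS = ("accuracy", "clarity", "efficiency")

def average_scores(ratings: list[dict[str, int]]) -> dict[str, float]:
    """Average each metric across the rated outputs of one prompt version."""
    return {m: fmean(r[m] for r in ratings) for m in METRICS}


# Hypothetical ratings for two versions of the same prompt.
v1 = [{"accuracy": 3, "clarity": 3, "efficiency": 4},
      {"accuracy": 2, "clarity": 4, "efficiency": 4}]
v2 = [{"accuracy": 5, "clarity": 4, "efficiency": 4},
      {"accuracy": 4, "clarity": 5, "efficiency": 4}]
print("v1:", average_scores(v1))
print("v2:", average_scores(v2))
```

Keeping the metrics separate, rather than collapsing them into one number, shows which dimension a prompt change actually moved.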

4. Use Templates for Repetition

If you repeat the same type of task, turn that structure into a prompt template.

Example Template:

You are a {role}. Analyze the following {input type} and provide {output format} including {key points}.

Templates reduce friction, keep tone consistent, and make collaboration easier if you share your process with others.
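The template above maps directly onto Python's `str.format`. In this sketch the placeholders use underscores (`input_type` instead of `input type`) so they can be passed as keyword arguments; `fill_template` is a hypothetical helper, and a forgotten field raises `KeyError` instead of sending an incomplete prompt.

```python
# A minimal sketch of the reusable template using str.format.
# Underscored placeholder names let the fields be passed as keyword arguments.

TEMPLATE = ("You are a {role}. Analyze the following {input_type} and provide "
            "{output_format} including {key_points}.")

def fill_template(**fields: str) -> str:
    """Fill every placeholder; a missing field raises KeyError."""
    return TEMPLATE.format(**fields)


prompt = fill_template(
    role="financial analyst",
    input_type="quarterly report",
    output_format="three bullet points",
    key_points="revenue trends and risks",
)
print(prompt)
```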

5. Review and Refine Prompt Efficiency

AI models evolve rapidly. A prompt that worked perfectly six months ago might now produce weaker results.

Schedule periodic reviews to test your top prompts with the latest LLM versions. Adjust for new features like structured outputs or function calling to maintain accuracy and efficiency.
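If you move a prompt to JSON output, a local check keeps a model update from silently breaking downstream steps. This sketch is a client-side check only, not any provider's structured-output feature; `parse_json_reply` and the required keys are hypothetical.

```python
# A minimal sketch: parse a model reply as JSON and confirm the expected keys.
# This is a local check, not a provider feature; the key names are hypothetical.
import json

REQUIRED_KEYS = {"summary", "risks", "next_steps"}

def parse_json_reply(reply: str) -> dict:
    """Parse a reply as a JSON object and fail loudly if keys are missing."""
    data = json.loads(reply)  # raises json.JSONDecodeError on invalid JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = REQUIRED_KEYS - set(data)
    if missing:
        raise ValueError(f"missing keys: {sorted(missing)}")
    return data


reply = '{"summary": "On track", "risks": [], "next_steps": ["Ship v2"]}'
print(parse_json_reply(reply)["next_steps"])
```

Rerunning a small set of saved replies through a check like this after each model update is a cheap way to catch format drift early.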

Build a Prompt System, Not a Collection

The most effective prompt engineers don’t simply collect prompts, they build systems. Each prompt becomes part of a repeatable framework that’s tested, refined, and improved over time.

This system-driven approach defines prompt engineering best practices in 2025, transforming isolated experiments into a reliable creative workflow that improves precision, scalability, and long-term results.

Staying Current with AI and Prompt Engineering Trends (2025 and Beyond)

Prompt engineering continues to evolve alongside rapid advances in large language models. Strategies that worked in 2024 are already being replaced by more structured, adaptive, and automated approaches in 2025.

The most effective professionals treat AI as a moving target, not a static tool. Staying current with model updates, new prompting frameworks, and emerging best practices ensures your methods remain accurate, efficient, and competitive. Continuous learning and experimentation are what keep prompt engineers ahead of the curve.

- Follow Model Updates and Release Notes

- Experiment Across Models

- Learn from Real-World Use Cases

- Keep a Continuous Improvement Loop

- Stay Curious About AI Research

1. Follow Model Updates and Release Notes

Every major model update, whether to ChatGPT, Gemini, or Claude, can change how prompts are processed. Even small shifts in reasoning depth, context handling, or token limits can noticeably affect output quality.

Make a habit of reviewing official release notes and changelogs. They often highlight new capabilities such as structured outputs, improved reasoning chains, or multimodal understanding. Updating your existing prompts to align with these changes can lead to faster, more accurate, and contextually aware results.

At the time of writing, the GPT-5.1 update to ChatGPT had introduced several improvements in reasoning and system instruction handling, underscoring how quickly the field evolves.

2. Experiment Across Models

Relying on a single model limits your understanding of how prompts truly behave. A prompt that performs perfectly in ChatGPT may generate very different results in Claude or Gemini, since each model is trained differently and interprets context and instructions in its own way.

Testing your prompts across multiple models helps you build more flexible and transferable frameworks. It also sharpens your intuition for how AI systems interpret instructions, a valuable skill for anyone working in AI-powered content creation, marketing, education, or automation.

For deeper insight into model comparisons and benchmarks, see Stanford’s HELM project, which regularly evaluates the reasoning and performance differences between leading LLMs.
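A lightweight way to compare models is to send the same prompt to each one and score the replies against your constraints. In this sketch each model is any callable that takes a prompt and returns text; `compare_models`, the two stubs, and their canned replies are hypothetical placeholders, not real model outputs.

```python
# A minimal sketch: send one prompt to several models and check each reply
# against a word limit. Models are plain callables; swap in real client calls.
from typing import Callable

def compare_models(prompt: str, models: dict[str, Callable[[str], str]],
                   max_words: int) -> dict[str, dict]:
    """Return each model's reply, word count, and whether it met the limit."""
    report = {}
    for name, ask in models.items():
        reply = ask(prompt)
        words = len(reply.split())
        report[name] = {"reply": reply, "words": words,
                        "within_limit": words <= max_words}
    return report


# Hypothetical stubs with canned replies, standing in for real models.
stubs = {
    "model-a": lambda prompt: "Sales rose on EU demand.",
    "model-b": lambda prompt: ("Sales rose strongly this quarter, mainly because "
                               "demand in the EU was higher than expected."),
}
report = compare_models("Summarize the report in 10 words or fewer.", stubs,
                        max_words=10)
for name, result in report.items():
    print(name, result["words"], result["within_limit"])
```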

3. Learn from Real-World Use Cases

Prompt engineering delivers the most value when it’s applied to real-world challenges. Pay attention to how AI is being used in your field, from lesson planning and marketing automation to UX design and data-driven decision-making.

Study successful prompts in context, then reverse-engineer their structure, tone, and constraints. This approach helps you uncover what actually drives performance and reliability in different professional settings. The most effective prompt engineers learn by testing, observing outcomes, and refining patterns based on evidence, not theory.

For examples of real-world AI adoption and case studies, explore MIT Technology Review’s AI coverage.

4. Keep a Continuous Improvement Loop

Prompt engineering is an evolving skill, not a finished product. Your best prompts today might underperform six months from now as AI models and interfaces evolve. Set aside time regularly to review your prompt library, evaluate performance, and refine templates for clarity, structure, and reasoning.

This continuous improvement loop keeps your workflow efficient and aligned with the latest model capabilities. The most effective AI professionals treat prompt refinement like professional development, a skill that compounds over time through experimentation and review.

5. Stay Curious About AI Research

The latest breakthroughs in prompting often originate from academic papers or open-source projects.

Concepts like self-consistency prompting, adaptive context control, or prompt compression emerged from research long before reaching mainstream tools. Even brief exposure to these studies can spark ideas that make your own workflows more sophisticated and reliable.
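Self-consistency, as described in the research literature, samples several independent reasoning paths for the same question and keeps the final answer they most often agree on. This sketch shows only the voting step; `self_consistent_answer` is a hypothetical helper, `sample_answer` stands for a callable that would wrap a model call at a nonzero temperature, and the canned answers are placeholders.

```python
# A minimal sketch of self-consistency voting: sample several answers to the
# same question and keep the most common one.
from collections import Counter
from typing import Callable

def self_consistent_answer(sample_answer: Callable[[], str],
                           n: int = 5) -> tuple[str, float]:
    """Return the majority answer and the share of samples that agreed."""
    answers = [sample_answer().strip() for _ in range(n)]
    best, votes = Counter(answers).most_common(1)[0]
    return best, votes / n


# Hypothetical canned samples standing in for five model runs.
canned = iter(["1 p.m.", "1 p.m.", "12 p.m.", "1 p.m.", "2 p.m."])
answer, agreement = self_consistent_answer(lambda: next(canned), n=5)
print(answer, agreement)  # 1 p.m. 0.6
```

A low agreement share is itself useful: it signals that the prompt or the question is ambiguous and worth refining.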

Final Thoughts

Prompt engineering in 2025 has evolved far beyond clever phrasing. It is now a structured process rooted in clarity, context, and critical thinking. The best results come from users who treat prompting as a system, one that can be tested, refined, and improved over time.

Whether you are using ChatGPT to write, analyze, code, or create, effective prompts rely on understanding how the model interprets your input and how small changes shape the outcome. Professionals who document what works, measure quality, and iterate consistently are the ones turning AI tools into genuine productivity multipliers.

In the end, the real value of prompt engineering lies in mastery, not memorization. Keep refining, experimenting, and adapting your methods as models evolve. The more intentional your process becomes, the more control, creativity, and consistency you will achieve, and that is the foundation of prompt engineering best practices in 2025.

If you want to keep improving your results with ChatGPT and other AI tools, check out all my AI guides for more practical techniques, real-world examples, and hands-on frameworks for better prompting.

Frequently Asked Questions (FAQ)

What is prompt engineering in simple terms?

Prompt engineering is the practice of writing clear, structured instructions that guide AI models like ChatGPT to produce better results. It’s about understanding how language affects model behavior and designing prompts that balance clarity, context, and creativity.

Why is prompt engineering important in 2025?

As AI tools become more advanced, the quality of their responses depends heavily on how you communicate with them. In 2025, prompt engineering has evolved from a niche skill into a professional discipline, one that improves accuracy, creativity, and consistency in AI-powered tasks.

What are some advanced prompt engineering techniques?

Some of the most effective techniques include few-shot prompting, chain-of-thought reasoning, role-based prompting, format constraints, and self-consistency prompting. These methods guide the model to think through problems logically and produce structured, reliable answers.

How do I know if my prompt is good?

A good prompt gives the model a clear task, enough context to complete it accurately, and a defined output structure. If you can predict what the response will look like before running it, your prompt is well-designed.

How can I reuse prompts effectively?

Keep a personal prompt library where you store, tag, and refine your best-performing prompts. Reusing proven structures saves time and ensures consistent quality across projects. Treat each successful prompt as a system that can evolve with practice.

Can prompt engineering be automated?

Partially. Some tools now help automate prompt testing, formatting, and evaluation, but human understanding remains essential. Automation can support consistency, yet creativity and reasoning still depend on human insight.

Is prompt engineering only for ChatGPT?

No. While many examples focus on ChatGPT, the same principles apply to other LLMs like Claude, Gemini, and open-source models. Once you understand how prompts influence one model, adapting to others becomes much easier.

How can I stay updated on prompt engineering trends?

Stay current by reading new research, testing prompts across multiple models, and following official AI documentation. The most effective learners treat prompt engineering as an evolving practice, not a static skill.